Code the Edge. Deploy to Jetson.

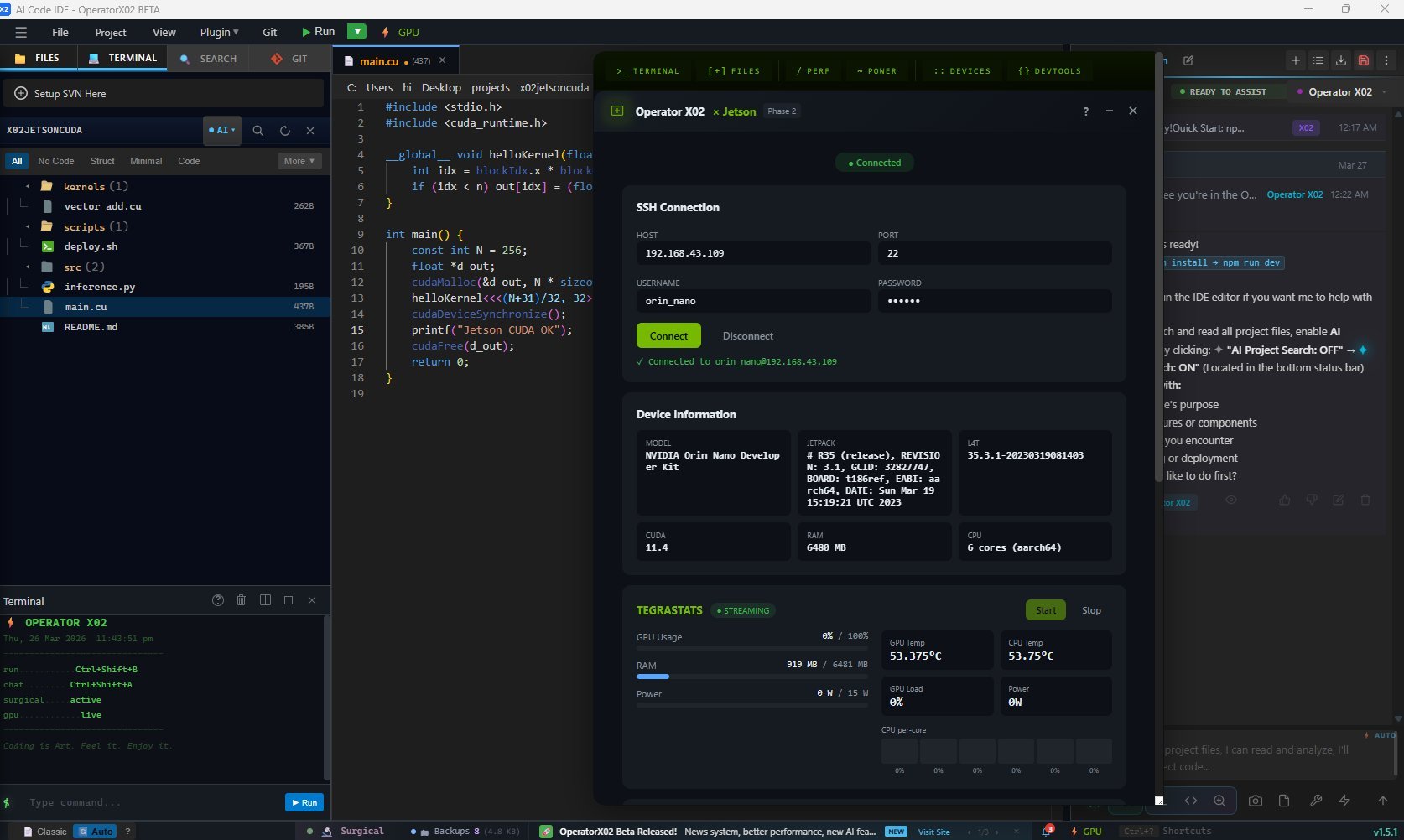

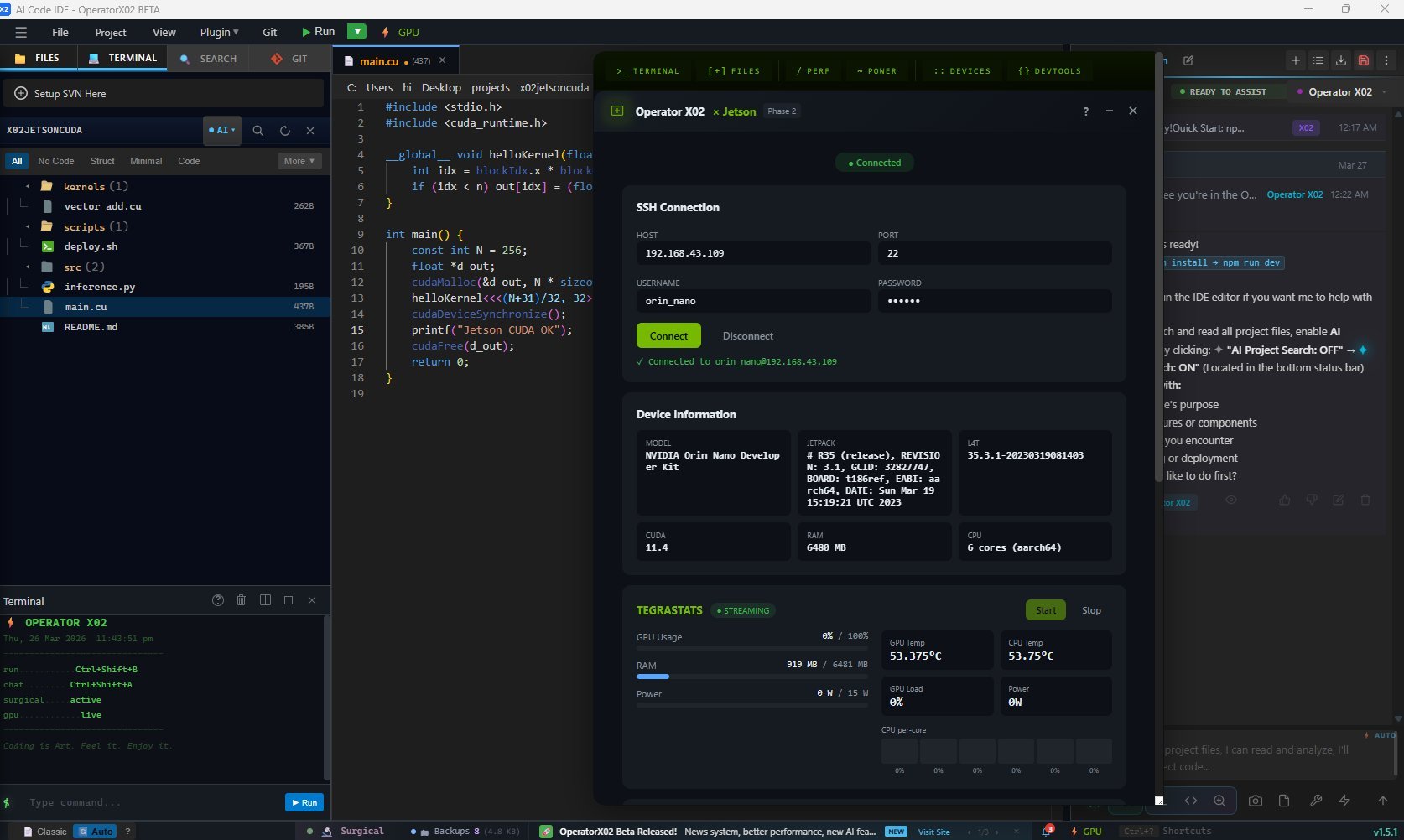

Operator X02 now speaks NVIDIA. SSH into your Jetson Orin, watch GPU telemetry in real-time, deploy CUDA kernels, and get AI-assisted edge computing — all without leaving your IDE.

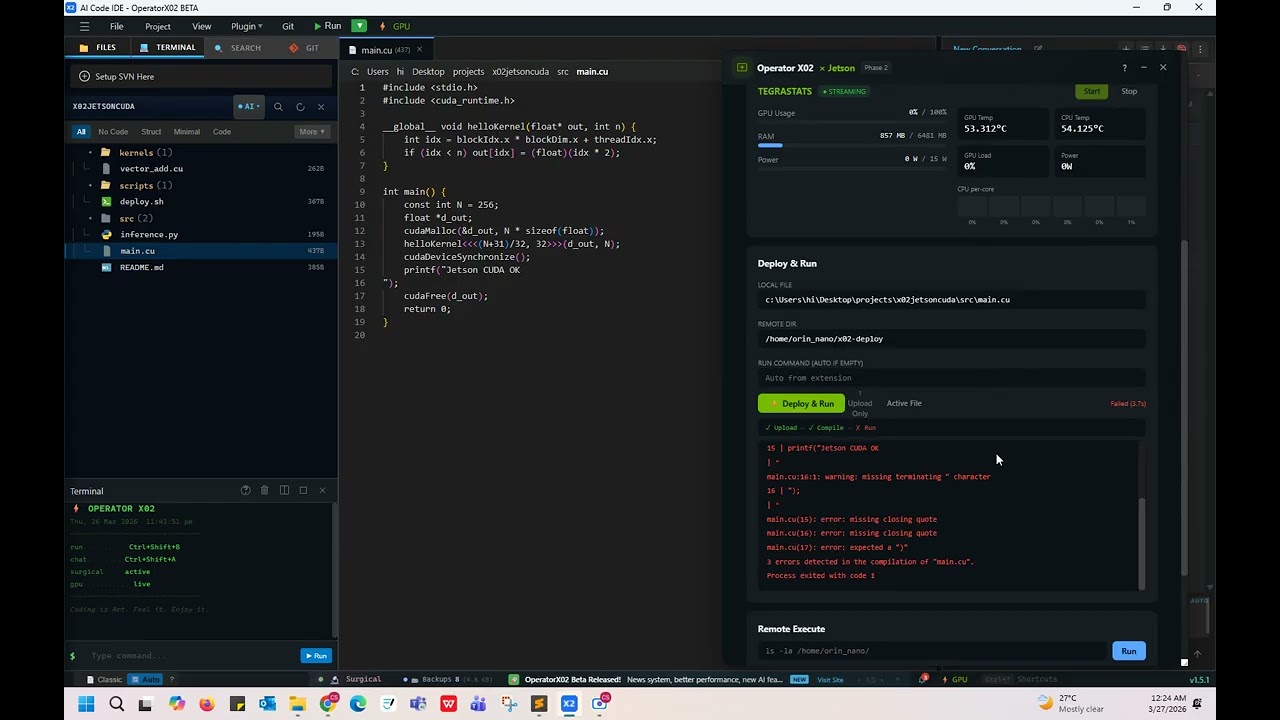

From SSH connection to CUDA deployment — every step of the edge-AI development loop lives inside X02. No terminal juggling. No context switching.

SSH connectivity is built on russh 0.44, a pure-Rust async SSH implementation. This removes the vendored OpenSSL compile step that made first builds on fresh installs extremely slow, so cold build time drops sharply.

Live telemetry comes from `tegrastats`. Visual gauges update in real time with configurable poll intervals.

Deploy `.cu` and `.py` files directly from the editor to your Jetson via SSH. The panel triggers `nvcc` compilation on-device and streams build output back to X02 in real time.

CUDA constructs such as `__global__`, `__device__`, thread indexing, and memory intrinsics are highlighted with colour-coded feedback.

Watch X02 connect to a real Jetson Orin Nano, stream live `tegrastats` telemetry, and deploy a CUDA file, all from inside the IDE.
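To make the telemetry step concrete, here is a minimal Python sketch of turning one line of `tegrastats` text into numbers a gauge could display. It is not X02's actual parser: the function name `parse_tegrastats`, the dictionary keys, and the sample line are all illustrative assumptions, and real `tegrastats` output varies between Jetson models and JetPack releases.

```python
import re

# Illustrative sample only; field names and order differ across
# Jetson models and JetPack releases.
SAMPLE = (
    "RAM 2448/7620MB (lfb 4x4MB) SWAP 0/3810MB (cached 0MB) "
    "CPU [1%@729,0%@729,0%@729,0%@729,0%@729,0%@729] "
    "EMC_FREQ 0%@2133 GR3D_FREQ 12%@[305] "
    "CPU@45.781C GPU@44.437C tj@45.781C "
    "VDD_IN 4540mW/4540mW VDD_SOC 1416mW/1416mW"
)

RAM_RE = re.compile(r"RAM (\d+)/(\d+)MB")
GPU_RE = re.compile(r"GR3D_FREQ (\d+)%")              # GPU load in percent
TEMP_RE = re.compile(r"(\w+)@(-?\d+(?:\.\d+)?)C\b")   # e.g. GPU@44.437C
POWER_RE = re.compile(r"(\w+) (\d+)mW/(\d+)mW")       # rail current/average


def parse_tegrastats(line):
    """Extract a few gauge-friendly numbers from one tegrastats line.

    Hypothetical helper for illustration; missing fields are simply skipped.
    """
    stats = {"temps_c": {}, "power_mw": {}}
    ram = RAM_RE.search(line)
    if ram:
        stats["ram_used_mb"] = int(ram.group(1))
        stats["ram_total_mb"] = int(ram.group(2))
    gpu = GPU_RE.search(line)
    if gpu:
        stats["gpu_util_pct"] = int(gpu.group(1))
    for name, value in TEMP_RE.findall(line):
        stats["temps_c"][name] = float(value)
    for rail, now_mw, _avg_mw in POWER_RE.findall(line):
        stats["power_mw"][rail] = int(now_mw)  # keep the instantaneous reading
    return stats


if __name__ == "__main__":
    print(parse_tegrastats(SAMPLE))
```

Keeping each field optional means a line from a different board, with extra or missing sensors, still yields a partial result instead of an error.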

Developing for NVIDIA Jetson used to mean juggling five different tools. V1.5 collapses the entire workflow into one IDE.

Before: `ssh nvidia@192.168.x.x` from a terminal; `tegrastats` in a separate terminal window, watching scrolling text; running `cat /etc/nv_tegra_release` by hand.

With V1.5: `.cu` / `.py` files are uploaded and compiled on-device, and the build log streams back; `__global__`, warps, and thread indexing are coloured in the editor.

`tegrastats` data from a real Jetson Orin Nano: GPU utilization, thermal readings, and power consumption rendered as live gauges inside the IDE.
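The upload-and-compile step can be pictured as a short sequence of remote commands. The Python sketch below only builds those commands as argument lists and runs nothing. It uses the OpenSSH `ssh` and `scp` command-line tools for illustration, not X02's russh-based backend. The function name `build_deploy_commands`, the remote directory `/tmp/x02_deploy`, and the host `nvidia@jetson.local` are hypothetical.

```python
import shlex
from pathlib import Path, PurePosixPath


def build_deploy_commands(local_file, target, remote_dir="/tmp/x02_deploy"):
    """Return the argv lists for uploading a file and, for .cu, compiling it.

    Hypothetical helper: `target` is a user@host string and `remote_dir`
    is an assumed scratch directory on the Jetson.
    """
    name = Path(local_file).name
    remote_path = PurePosixPath(remote_dir) / name
    commands = [
        # Make sure the scratch directory exists on the device.
        ["ssh", target, f"mkdir -p {shlex.quote(remote_dir)}"],
        # Copy the source file across.
        ["scp", local_file, f"{target}:{remote_path}"],
    ]
    if name.endswith(".cu"):
        # Compile on-device; assumes nvcc is on the remote PATH.
        binary = remote_path.with_suffix("")
        build = (
            f"nvcc -o {shlex.quote(str(binary))} "
            f"{shlex.quote(str(remote_path))}"
        )
        commands.append(["ssh", target, build])
    return commands


if __name__ == "__main__":
    for argv in build_deploy_commands("kernels/vector_add.cu",
                                      "nvidia@jetson.local"):
        print(" ".join(argv))
```

Each list could be passed to `subprocess.run` and its output read line by line to stream the build log. On many Jetson images `nvcc` lives under `/usr/local/cuda/bin` and may not be on the `PATH` of a non-interactive SSH session, so a real deployment might need the full path.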

NVIDIA Jetson support ships in structured phases. Phase 1 and Phase 2 are live in V1.5. Phase 3 focuses on AI-accelerated edge inference from within the IDE.

`normStats()` handles all Rust backend naming conventions reliably.

Operator X02 V1.5 is free and open-source under MIT. Bring your own API keys. No login, no telemetry, no subscription. Just connect your Jetson and code.